Cognitive computing is an innovative blend of human science and computer technology. Cognitive science studies the human brain and how it functions. Cognitive computing merges these learnings with computer technologies, most importantly artificial intelligence, natural language processing, and coded algorithms, to mimic the way a human brain works.

Therefore, the goal of cognitive computing is to simulate human thought processes in a computerized model using self-learning algorithms. These algorithms draw on data mining, speech recognition, vision, dialog recognition, pattern recognition, machine learning, and natural language processing. As an end result, the computer can mimic the way the human brain works.

The end results have far-reaching impacts on our private lives, healthcare, business, and many other areas.

History and Origins of Cognitive Computing

The roots of modern cognitive computing date back to the mid-19th century, with the work of mathematician George Boole and his book The Laws of Thought, and the proposals of Charles Babbage for creating what he termed an “analytical engine.” The term Artificial Intelligence (AI) was coined by the late John McCarthy in 1955 (revised in 2007), when he defined AI as “the science and engineering of making intelligent machines.”

Artificial intelligence has been a far-flung goal of computing since the conception of the computer, but we may be getting closer than ever with new cognitive computing models. While computers have been faster at calculations and processing than humans for decades, they haven’t been able to accomplish tasks that humans take for granted as simple, like understanding natural language, or recognizing unique objects in an image.

AI research began to accelerate during the 1980s, when funding for developing new machine learning and AI technologies increased considerably over previous decades. Then, on May 11, 1997, the world’s imagination was captivated when IBM’s Deep Blue beat Garry Kasparov, the reigning world chess champion. AI research exploded.

- 2005: A Stanford-built robot wins the DARPA Grand Challenge

- 2011: Watson defeats two of the greatest Jeopardy! champions without being connected to the Internet

Such grand creations have long been the purview of human imagination, but only in the past 30-40 years (with the last decade being of great importance) has the reality of Cognitive Computing started to manifest in our daily affairs.

“Cognitive computing goes well beyond artificial intelligence and human-computer interaction as we know it–it explores the concepts of perception, memory, attention, language, intelligence and consciousness.”

Some people say that cognitive computing represents the third era of computing: we went from computers that could tabulate sums (1900s) to programmable systems (1950s), and now to cognitive systems. These cognitive systems rely on deep learning algorithms and neural networks to process information by comparing it to a training set of data. The more data the system is exposed to, the more it learns, and the more accurate it becomes over time. The neural network can be pictured loosely as a complex “tree” of decisions the computer can make to arrive at an answer.

What can Cognitive Computing do?

The personal digital assistants we have on our phones and computers now (Siri and Google Assistant among others) are not true cognitive systems; they have a pre-programmed set of responses and can only respond to a preset number of requests. But the time is coming in the near future when we will be able to address our phones, our computers, our cars, or our smart houses and get a real, thoughtful response rather than a pre-programmed one.

As computers become more able to think like human beings, they will also expand our capabilities and knowledge. Just as the heroes of science fiction movies rely on their computers to make accurate predictions, gather data, and draw conclusions, so we will move into an era when computers can augment human knowledge and ingenuity in entirely new ways.

Applications of Cognitive Computing:

There are many possible applications of cognitive computing. It can handle anything from a minute, routine activity to a complex set of tasks involving logical reasoning. Here are some possible applications of cognitive computing in business:

1. Chatbots:

Chatbots are programs that can simulate a human conversation by understanding the communication in a contextual sense. To make this possible, a field of artificial intelligence called natural language processing is used. Natural language processing allows programs to take inputs from humans (voice or text), analyze them, and then provide logical answers. Cognitive computing gives chatbots a certain level of intelligence in communication, such as understanding a user’s needs based on past communication and giving suggestions.
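The idea of reusing past communication can be illustrated with a deliberately small sketch. It is not real natural language processing: it matches keywords instead of understanding language, and the intents, keywords, and replies are all hypothetical names invented for this example. It only shows how remembering the previous intent lets a bot answer a vague follow-up.

```python
import re

# Hypothetical intents and keywords, invented for illustration only.
INTENT_KEYWORDS = {
    "order_status": {"order", "delivery", "shipped", "track"},
    "refund": {"refund", "return", "money"},
}

# Hypothetical canned replies, one per intent.
REPLIES = {
    "order_status": "Let me check the status of your order.",
    "refund": "I can help you start a refund.",
}

FALLBACK = "Sorry, could you tell me a bit more?"


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def detect_intent(text):
    """Return the intent whose keywords overlap the message most, or None."""
    tokens = set(tokenize(text))
    best_intent, best_overlap = None, 0
    for intent, keywords in INTENT_KEYWORDS.items():
        overlap = len(tokens & keywords)
        if overlap > best_overlap:
            best_intent, best_overlap = intent, overlap
    return best_intent


class ToyChatbot:
    """Remembers the last recognized intent so a vague follow-up can reuse it."""

    def __init__(self):
        self.last_intent = None

    def reply(self, message):
        intent = detect_intent(message)
        if intent is None:
            # Nothing recognized: fall back on the conversation so far.
            intent = self.last_intent
        else:
            self.last_intent = intent
        return REPLIES.get(intent, FALLBACK)


bot = ToyChatbot()
print(bot.reply("Where is my order?"))  # recognized from the keyword "order"
print(bot.reply("Any update?"))         # no keyword; reuses the previous intent
```

A production chatbot would replace the keyword lookup with a trained language model, but the split between intent detection and conversation state is a common way to structure such systems.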

2. Sentiment analysis:

Sentiment analysis is the science of understanding emotions conveyed in a communication. While it is easy for humans to understand tone, intent, etc. in a conversation, it is far more complicated for machines. To enable machines to understand human communication, you need to feed them training data of human conversations and then measure the accuracy of their analysis. Sentiment analysis is popularly used to analyze social media communications like tweets, comments, reviews, and complaints.
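The train-then-measure loop described above can be sketched with a tiny Naive Bayes classifier written in plain Python. This is one common baseline technique, chosen here for brevity, not necessarily what any particular cognitive system uses. The example sentences and labels are made up for illustration, and a real system would need far more data.

```python
import math
from collections import Counter, defaultdict

# Hypothetical labeled examples, invented for illustration only.
TRAIN = [
    ("i love this product", "pos"),
    ("great service and friendly staff", "pos"),
    ("absolutely wonderful experience", "pos"),
    ("love the fast delivery", "pos"),
    ("i hate this product", "neg"),
    ("terrible service and rude staff", "neg"),
    ("absolutely awful experience", "neg"),
    ("hate the slow delivery", "neg"),
]

# Held-out examples used only to measure accuracy, never for training.
HELD_OUT = [
    ("love the friendly staff", "pos"),
    ("awful and rude", "neg"),
]


def tokenize(text):
    """Split text into lowercase whitespace-separated tokens."""
    return text.lower().split()


def train(examples):
    """Count labels and per-label word frequencies from (text, label) pairs."""
    label_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in examples:
        label_counts[label] += 1
        for word in tokenize(text):
            word_counts[label][word] += 1
            vocab.add(word)
    return label_counts, word_counts, vocab


def predict(model, text):
    """Return the label with the highest log-probability under Naive Bayes."""
    label_counts, word_counts, vocab = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, count in label_counts.items():
        score = math.log(count / total)  # log prior
        denom = sum(word_counts[label].values()) + len(vocab)
        for word in tokenize(text):
            if word in vocab:  # words never seen in training are ignored
                # Add-one (Laplace) smoothing avoids zero probabilities.
                score += math.log((word_counts[label][word] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


def accuracy(model, examples):
    """Fraction of examples whose predicted label matches the true label."""
    correct = sum(predict(model, text) == label for text, label in examples)
    return correct / len(examples)


model = train(TRAIN)
print(f"Held-out accuracy: {accuracy(model, HELD_OUT):.2f}")
```

Keeping the held-out examples separate from the training data is what makes the accuracy figure meaningful: it measures how the model handles text it has not seen.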

3. Face detection:

Face detection is an advanced form of image analysis. A cognitive system uses data such as the structure, contours, and eye color of a face to differentiate it from others. Once a facial image is generated, it can be used to identify the face in an image or video. Traditionally this was done using 2D images, but it can now also be done using 3D sensors, which provide greater accuracy. This can be used in security systems, for example to unlock a locker or a mobile phone.

4. Risk assessment:

Risk management in financial services involves an analyst going through market trends, historical data, etc. to predict the uncertainty involved in an investment. But this analysis depends not only on data but also on trends, gut feel, behavior analytics, etc. Thus it is both an art and a science. Big data analysis (i.e. analysis of past trends alone) is not sufficient for a risk assessment. Because of the intuition and experience involved in predicting the market’s future, it is necessary to make algorithms intelligent. Cognitive computing helps combine behavioral data and market trends to generate insights. These can then be evaluated by experienced analysts for further analysis and predictions.

5. Fraud detection:

Fraud detection is another application of cognitive computing in finance. It is essentially a type of anomaly detection. The goal of fraud detection is to identify transactions that don’t seem to be normal (anomalies). This also requires programs to analyze past data to understand the parameters to be used for judging a transaction. A range of data analysis techniques, such as logistic regression, decision trees, random forests, and clustering, can be used to detect anomalies.
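To make the anomaly-detection idea concrete, here is a minimal sketch that uses a plain z-score rule. This is a simpler stand-in, not one of the techniques listed above. It learns what "normal" looks like from past transaction amounts and flags new amounts that fall too far from it. The amounts and the threshold of 3 standard deviations are assumptions chosen for illustration.

```python
import statistics

# Hypothetical past transaction amounts for one customer (illustrative only).
PAST_AMOUNTS = [20.0, 35.5, 18.0, 42.0, 27.5, 31.0, 24.0, 38.0]


def fit_baseline(amounts):
    """Learn the 'normal' profile: mean and sample standard deviation."""
    return statistics.mean(amounts), statistics.stdev(amounts)


def is_anomalous(amount, baseline, threshold=3.0):
    """Flag an amount whose z-score against the baseline exceeds the threshold."""
    mean, std = baseline
    if std == 0:
        # All past amounts were identical; anything different is unusual.
        return amount != mean
    z_score = abs(amount - mean) / std
    return z_score > threshold


baseline = fit_baseline(PAST_AMOUNTS)
for amount in (33.0, 950.0):
    label = "anomaly" if is_anomalous(amount, baseline) else "normal"
    print(f"{amount:>8.2f} -> {label}")
```

Real fraud systems judge many parameters at once (merchant, location, time of day), which is where the models named above become useful. The single-feature rule here only shows the shape of the problem: fit on past data, then score new transactions.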

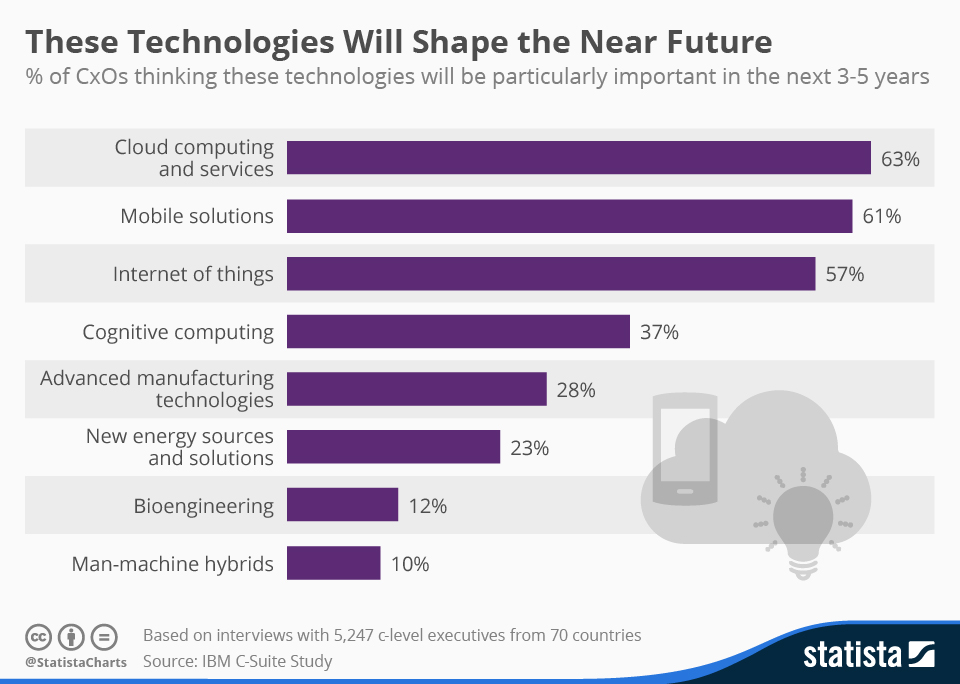

Future Trends in Cognitive Computing

Cognitive computing systems will be the next generation of computing systems, able to converse in human language. Globally, this market is expected to grow at a compound annual growth rate (CAGR) of 34.2% and is estimated to reach $36,000 million by 2023.
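For readers unfamiliar with CAGR, the following sketch shows how compounding at a fixed annual rate works. The 34.2% rate and the $36,000 million target come from the forecast above. The number of years is a hypothetical assumption, since the forecast's base year is not stated here, so the implied starting value is illustrative only.

```python
def grow(start_value, annual_rate, years):
    """Value after compounding start_value at annual_rate for the given years."""
    return start_value * (1 + annual_rate) ** years


def implied_start(end_value, annual_rate, years):
    """Starting value that would compound to end_value over the given years."""
    return end_value / (1 + annual_rate) ** years


CAGR = 0.342              # growth rate quoted in the forecast
TARGET_MILLIONS = 36_000  # market size quoted for 2023, in millions of dollars
ASSUMED_YEARS = 5         # hypothetical: the base year is not given in the text

baseline_millions = implied_start(TARGET_MILLIONS, CAGR, ASSUMED_YEARS)
print(f"Implied starting market size: ${baseline_millions:,.0f} million")
```

At this rate the market multiplies by 1.342 every year, so a different assumed horizon changes the implied starting size substantially.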

Global markets in cognitive computing will primarily be driven by natural language processing, machine learning, reasoning, and information retrieval. However, core opportunities will include:

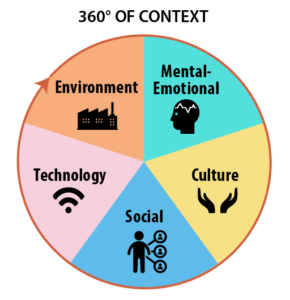

Contextual Analytics

A contextual analysis is simply an analysis of a text (in whatever medium, including multi-media) that helps us to assess that text within the context of its historical and cultural setting, but also in terms of its textuality – or the qualities that characterize the text as a text. A contextual analysis combines features of formal analysis with features of “cultural archeology,” or the systematic study of social, political, economic, philosophical, religious, and aesthetic conditions that were (or can be assumed to have been) in place at the time and place when the text was created. While this may sound complicated, it is in reality deceptively simple: it means “situating” the text within the milieu of its times and assessing the roles of author, readers (intended and actual), and “commentators” (critics, both professional and otherwise) in the reception of the text.

A contextual analysis can proceed along many lines, depending upon how complex one wishes to make the analysis. But it generally includes several key questions:

1. What does the text reveal about itself as a text?

– Describe (or characterize) the language (the words, or vocabulary) and the rhetoric (how the words are arranged in order to achieve some purpose). These are the primary components of style.

2. What does the text tell us about its apparent intended audience(s)?

– What sort of reader does the author seem to have envisioned, as demonstrated by the text’s language and rhetoric?

– What sort of qualifications does the text appear to require of its intended reader(s)? How can we tell?

– What sort of readers appear to be excluded from the text’s intended audiences? How can we tell?

– Is there, perhaps, more than one intended audience?

3. What seems to have been the author’s intention? Why did the author write this text? And why did the author write this text in this particular way, as opposed to other ways in which the text might have been written?

– Remember that any text is the result of deliberate decisions by the author. The author has chosen to write (or paint, or whatever) with these particular words and has therefore chosen not to use other words that she or he might have used. So we need to consider:

– what the author said (the words that have been selected);

– what the author did not say (the words that were not selected); and

– how the author said it (as opposed to other ways it might or could have been said).

4. What is the occasion for this text? That is, is it written in response to:

– some particular, specific contemporary incident or event?

– some more “general” observation by the author about human affairs and/or experiences?

– some definable set of cultural circumstances?

5. Is the text intended as some sort of call to – or for – action?

– If so, by whom? And why?

– And also if so, what action(s) does the author want the reader(s) to take?

6. Is the text intended rather as some sort of call to – or for – reflection or consideration rather than direct action?

– If so, what does the author seem to wish the reader to think about and to conclude or decide?

– Why does the author wish the readers to do this? What is to be gained, and by whom?

7. Can we identify any non-textual circumstances that affected the creation and reception of the text?

– Such circumstances include historical or political events, economic factors, cultural practices, and intellectual or aesthetic issues, as well as the particular circumstances of the author's own life.

Sensor Generated Data

Sensor data is the output of a device that detects and responds to some type of input from the physical environment. The output may be used to provide information or input to another system or to guide a process. Sensors can be used to detect just about any physical element, which gives an idea of the number and diversity of their applications.

Wireless sensor networks combine specialized transducers with a communications infrastructure for monitoring and recording conditions at diverse locations. Commonly monitored parameters include temperature, humidity, pressure, wind direction and speed, illumination intensity, vibration intensity, sound intensity, power-line voltage, chemical concentrations, pollutant levels and vital body functions.

Sensor data is an integral component of the growing Internet of Things (IoT) environment. In the IoT scenario, almost any entity imaginable can be outfitted with a unique identifier (UID) and the capacity to transfer data over a network. Much of the data transmitted is sensor data. The huge volume of data produced and transmitted from sensing devices can provide a lot of information but is often considered the next big data challenge for businesses. To deal with that challenge, sensor data analytics is a growing field of endeavor.

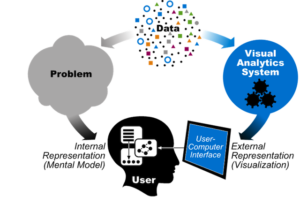

Cognitive Visualizations

The use of visual models such as pictures, diagrams and animations is increasing because of the complex nature of the concepts being conveyed. The role of visual literacy in the construction of knowledge can be described through the theoretical process of visualization, which answers the question “how can visual literacy be understood based on the theoretical cognitive process of visualization?” Based on various theories of cognitive processes, this theoretical process consists of three stages: internalization of visual models, conceptualization of visual models, and externalization of visual models.

To understand the theoretical cognitive process of visualization, read our article on "Data Visualization: Visualizing Big Data through Augmented Reality".

Influential SaaS Models

Software as a service (SaaS) is a software licensing and delivery model in which software is licensed on a subscription basis and is centrally hosted. It is sometimes referred to as "on-demand software", and was formerly referred to as "software plus services" by Microsoft. SaaS is typically accessed by users using a thin client via a web browser. According to a Gartner Group estimate, SaaS sales in 2010 reached $10 billion.

SaaS applications are also known as Web-based software, on-demand software and hosted software. The term "Software as a Service" (SaaS) is considered to be part of the nomenclature of cloud computing, along with Infrastructure as a Service (IaaS), Platform as a Service (PaaS), Desktop as a Service (DaaS), managed software as a service (MSaaS), mobile backend as a service (MBaaS), and information technology management as a service (ITMaaS).

Although not all software-as-a-service applications share all traits, the characteristics below are common among many SaaS applications:

- Configuration and customization

- Accelerated feature delivery

- Open integration protocols

- Forbes, What everyone needs to know about Cognitive Computing: https://www.forbes.com/sites/bernardmarr/2016/03/23/what-everyone-should-know-about-cognitive-computing/#1982e82c5088

- Future of Cognitive Computing: https://www.newgenapps.com/blog/what-is-cognitive-computing-applications-companies-artificial-intelligence

- Sensor Generated Data: https://internetofthingsagenda.techtarget.com/definition/sensor-data
