Neural networks are a programming paradigm that enables a computer to learn from observational data. The concept is inspired by the biological sciences. Neural networks are a core technology behind many of the best current solutions to problems in image recognition, speech recognition, and natural language processing.

In a neural network, the programmer doesn't tell the computer how to solve the problem. Instead, the network learns from observational data, figuring out its own solution to the problem at hand.

The Biological Inspiration

The brain is principally composed of tens of billions of neurons (a commonly cited estimate is roughly 86 billion), each connected to as many as 10,000 other neurons. Each neuron receives electro-chemical inputs from other neurons at the dendrites. If the sum of these electrical inputs is sufficiently powerful to activate the neuron, it transmits an electro-chemical signal along the axon and passes this signal to the other neurons whose dendrites are attached at any of the axon terminals. These attached neurons may then fire.

So, our entire brain is composed of these interconnected electro-chemical transmitting neurons. From a very large number of extremely simple processing units (each performing a weighted sum of its inputs, and then firing a binary signal if the total input exceeds a certain level) the brain manages to perform extremely complex tasks.

This is the model on which artificial neural networks are based. Thus far, artificial neural networks haven't come close to modeling the complexity of the brain, but they have proven to be good at problems that are easy for a human but difficult for a traditional computer, such as image recognition and predictions based on past knowledge.
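The simple processing unit described above (a weighted sum of the inputs followed by a binary firing decision) can be sketched in a few lines of Python. This is only an illustration: the function name `threshold_neuron` and the weights and threshold in the example are hypothetical values chosen so that the unit behaves like a logical AND.

```python
from typing import Sequence


def threshold_neuron(inputs: Sequence[float], weights: Sequence[float], threshold: float) -> int:
    """Return 1 (fire) if the weighted sum of the inputs exceeds the threshold, else 0."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total > threshold else 0


# Hypothetical weights and threshold: the unit fires only when both inputs are 1.
and_weights = [1.0, 1.0]
and_threshold = 1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", threshold_neuron([a, b], and_weights, and_threshold))
```

Changing only the threshold (for example to 0.5) turns the same unit into a logical OR, which hints at how much behavior is carried by the numbers rather than by the program.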

History of Neural Networks

Advances in biology allowed researchers to study the human brain at a very fine level of detail. In 1943, neurophysiologist Warren McCulloch and mathematician Walter Pitts published their research on neurons (the smallest part of the brain) and their importance in the functioning of the brain. They also modeled a simple neural network using electrical circuits. There were also other advances in computing and the biological sciences during this period.

In the 1950s, it finally became possible to simulate a hypothetical neural network. The first step towards this was made by Nathaniel Rochester of the IBM research laboratories. Unfortunately for him, the first attempt failed.

In the 1960s, research was published suggesting that the single-layered neural network could not be extended to multiple-layered neural networks. In addition, the early successes of some neural networks led to an exaggeration of their potential, especially considering the practical technology of the time. Promises went unfulfilled, and at times greater philosophical questions led to fear.

Finally, the first multilayered network was developed in 1975, and the 1980s were the years in which such networks were actively adapted and developed for practical implementations.

The fundamental idea behind neural networks is that if it works in nature, it must be able to work in computers. The future of neural networks, though, lies in the development of hardware. Much like advanced chess-playing machines such as Deep Blue, fast, efficient neural networks depend on hardware being specified for its eventual use.

Neural Network & Architecture

Neural network research is motivated by two desires: to obtain a better understanding of the human brain, and to develop computers that can deal with abstract and poorly defined problems. For example, conventional computers have trouble understanding speech and recognizing people's faces. In comparison, humans do extremely well at these tasks. Many different neural network structures have been tried, some based on imitating what a biologist sees under the microscope, some based on a more mathematical analysis of the problem.

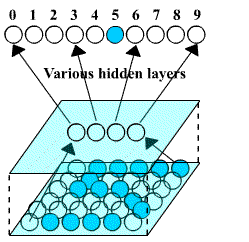

A neural network is formed in three layers, called the input layer, hidden layer, and output layer. Each layer consists of one or more nodes, represented in this diagram by the small circles. The lines between the nodes indicate the flow of information from one node to the next. In this particular type of neural network, the information flows only from the input to the output (that is, from left to right). Other types of neural networks have more intricate connections, such as feedback paths. The image below shows the structure of a widely used neural network.

Each value from the input layer is duplicated and sent to all of the hidden nodes. This is called a fully interconnected structure. The values entering a hidden node are multiplied by weights, a set of predetermined numbers stored in the program. The weighted inputs are then added to produce a single number. The active nodes of the output layer combine and modify the data to produce the two output values of this network.

Neural networks can have any number of layers, and any number of nodes per layer. Most applications use the three-layer structure with a maximum of a few hundred input nodes. The hidden layer is usually about 10% of the size of the input layer. In the case of target detection, the output layer needs only a single node. The output of this node is thresholded to provide a positive or negative indication of the target's presence or absence in the input data.
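To make the flow of values through the three layers more concrete, here is a minimal sketch of a fully interconnected forward pass with a single thresholded output node, as in the target detection case. Several things here are assumptions rather than details from the text: the sigmoid used to "modify" each weighted sum, the 0.5 decision threshold, the function names, and the hand-picked weights (in practice the weights come from training).

```python
import math
from typing import List, Sequence


def sigmoid(x: float) -> float:
    """Squash a weighted sum into the range (0, 1). Assumed activation."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(values: Sequence[float], weights: Sequence[Sequence[float]]) -> List[float]:
    """Compute one fully interconnected layer.

    weights[j][i] is the weight on the connection from incoming value i
    to node j, so every node receives a copy of every incoming value.
    """
    outputs = []
    for node_weights in weights:
        if len(node_weights) != len(values):
            raise ValueError("each node needs one weight per incoming value")
        weighted_sum = sum(w * v for w, v in zip(node_weights, values))
        outputs.append(sigmoid(weighted_sum))
    return outputs


def forward(inputs: Sequence[float],
            hidden_weights: Sequence[Sequence[float]],
            output_weights: Sequence[Sequence[float]]) -> List[float]:
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = layer_forward(inputs, hidden_weights)
    return layer_forward(hidden, output_weights)


def target_present(inputs: Sequence[float],
                   hidden_weights: Sequence[Sequence[float]],
                   output_weights: Sequence[Sequence[float]],
                   threshold: float = 0.5) -> bool:
    """Threshold the single output node to get a yes/no detection."""
    return forward(inputs, hidden_weights, output_weights)[0] > threshold


# Hypothetical weights: 4 input nodes -> 2 hidden nodes -> 1 output node.
hidden_w = [[0.5, -0.2, 0.1, 0.4], [-0.3, 0.8, -0.5, 0.2]]
output_w = [[1.2, -0.7]]
sample = [1.0, 0.0, 0.5, 0.25]

print(forward(sample, hidden_w, output_w))
print(target_present(sample, hidden_w, output_w))
```

Plain lists are used to keep the sketch dependency-free; a real implementation would more likely use a numerical library and matrix operations.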

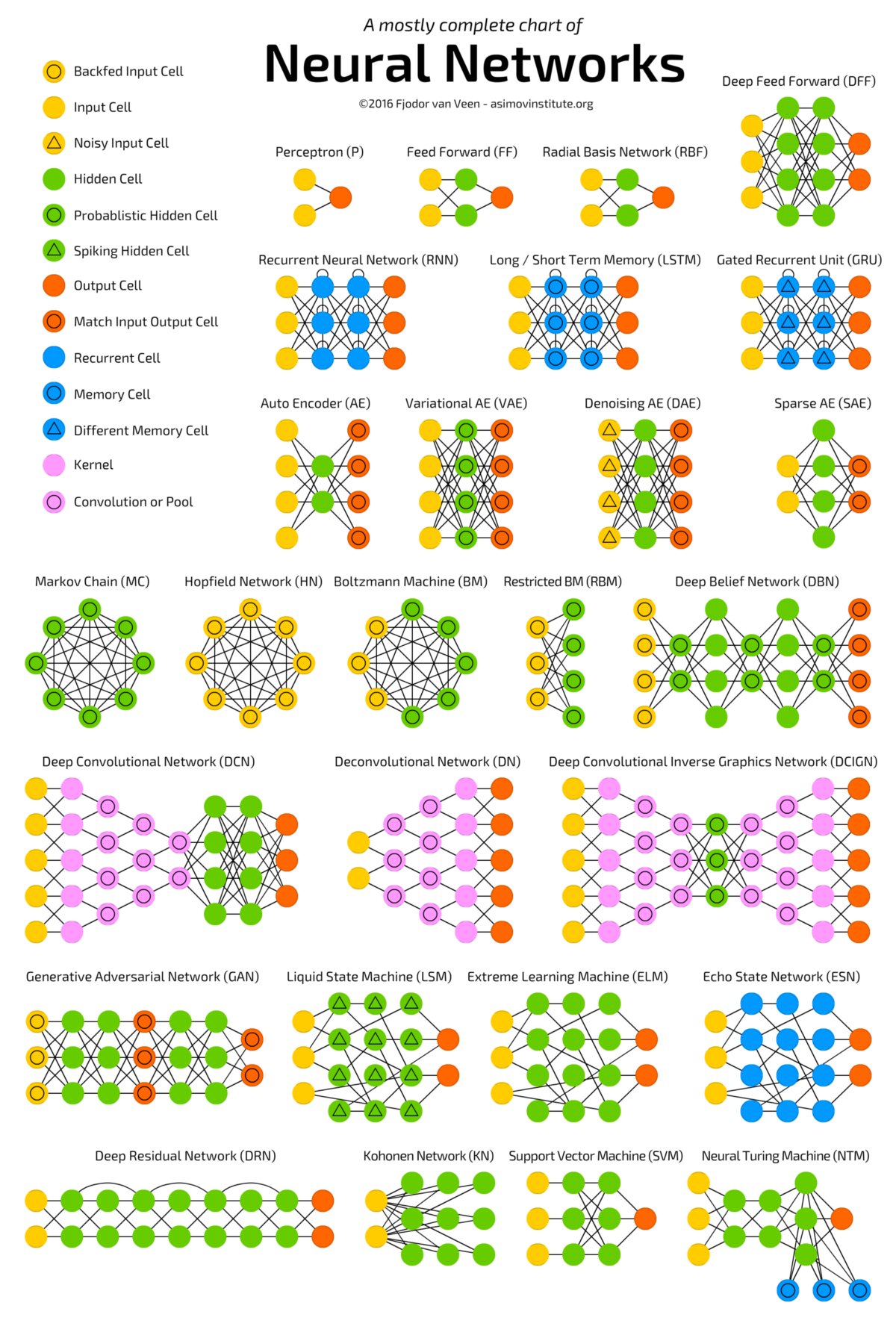

Below is a holistic neural network chart for the reader's reference. Source:

The four major uses of neural networks are:

Classification: An n-dimensional space is divided into various regions, and given a point in the space, the network should tell which region it belongs to. This idea is used in many real-world applications, for instance, in various pattern recognition programs. Each pattern is transformed into a multi-dimensional point and is classified into a certain group, each of which represents a known pattern.

Prediction: A neural network can be trained to produce outputs that are expected given a particular input. If we have a network that models a known sequence of values well, we can use it to predict future results. An obvious example is stock market prediction.

Clustering: Sometimes we have to analyze data that are so complicated there is no obvious way to classify them into different categories. Neural networks can be used to identify special features of these data and classify them into different categories without prior knowledge of the data. This technique is useful in data mining for both commercial and scientific uses.

Association: A neural network can be trained to "remember" a number of patterns, so that when a distorted version of a particular pattern is presented, the network associates it with the closest one in its memory and returns the original version of that particular pattern. This is useful for restoring noisy data.
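The association use can be illustrated with a small Hopfield-style network, one classic form of associative memory. The text above does not name a specific model, so this choice, the Hebbian weight rule, the function names, and the two stored patterns are all assumptions made for the sake of a runnable sketch. Patterns are lists of +1 and -1 values.

```python
from typing import List

Pattern = List[int]  # each entry is +1 or -1


def train_hopfield(patterns: List[Pattern]) -> List[List[float]]:
    """Build the weight matrix with the Hebbian rule (no self-connections)."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j] / n
    return weights


def recall(weights: List[List[float]], probe: Pattern, max_sweeps: int = 10) -> Pattern:
    """Update one node at a time until the state stops changing."""
    state = list(probe)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(state)):
            total = sum(w * s for w, s in zip(weights[i], state))
            new_value = 1 if total >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state


# Two hypothetical stored patterns (chosen to be orthogonal to each other).
stored = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
weights = train_hopfield(stored)

# A distorted copy of the first pattern: the first entry has been flipped.
noisy = [-1, 1, 1, 1, -1, -1, -1, -1]
restored = recall(weights, noisy)
print(restored, restored == stored[0])
```

A network this small can only store a few patterns reliably; the point is the behavior, where a noisy input settles onto the closest remembered pattern.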

Applications of Neural Networks

Character Recognition -

Character recognition has become an important and useful application, as handheld devices like smartphones are used all over the world for many day-to-day activities. Neural networks can be used to recognize handwritten characters. Google Pixel's on-the-fly translation is a good example of such technology.

Image Compression -

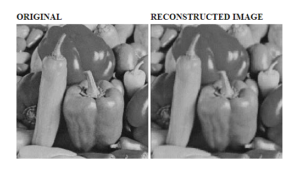

Neural networks can receive and process vast amounts of information at once, making them useful in image compression. In this example, the image is compressed from 64 pixels * 8 bits each = 512 bits to 16 hidden values * 3 bits each = 48 bits, so the compressed image is about 1/10th the size of the original.
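The arithmetic behind the "about 1/10th" figure can be checked directly. The numbers below are the ones quoted above; the function name `bits_needed` is just a hypothetical helper for this sketch.

```python
def bits_needed(count: int, bits_each: int) -> int:
    """Total number of bits for `count` values stored with `bits_each` bits per value."""
    return count * bits_each


original_bits = bits_needed(64, 8)    # 64 pixels, 8 bits each
compressed_bits = bits_needed(16, 3)  # 16 hidden values, 3 bits each
ratio = compressed_bits / original_bits

print(original_bits, compressed_bits, ratio)  # 512 48 0.09375
```

A ratio of 0.09375 is slightly under one tenth, which is where the rounded figure comes from.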

Stock Market Prediction -

The day-to-day business of the stock market is extremely complicated. Many factors weigh in on whether a given stock will go up or down on any given day. Since neural networks can examine a lot of information quickly and sort it all out, they have been touted as all-powerful tools in stock market prediction.

Medicine -

Neural networks work in this area, as in other areas of medical diagnosis, by comparing many different models. A patient may have regular checkups in a particular area, increasing the possibility of detecting a disease or dysfunction. The data, which may include heart rate, blood pressure, breathing rate, etc., are compared to different models. The models may include variations for age, sex, and level of physical activity.

Each individual's physiological data is compared to previous physiological data and/or the data of the various generic models. The deviations from the norm are compared to the known causes of deviations for each medical condition. The neural network can learn by studying the different conditions and models, merging them to form a complete conceptual picture, and then diagnose a patient's condition based upon the models.

This is just a general picture of what neural networks can do in real life. There are many creative uses of neural networks that arise from these general applications.

About the Author: Ananth Iyer. The writer of this article is a Ph.D. student and Graduate Teaching Assistant in the Department of Computer Science at the University of North Dakota.

Sources:

[1] https://cs.stanford.edu/people/eroberts/courses/soco/projects/neural-networks/index.html

[2] https://www.dspguide.com/ch26/2.htm

[3] http://neuralnetworksanddeeplearning.com/index.html

[4] https://towardsdatascience.com/the-mostly-complete-chart-of-neural-networks-explained-3fb6f2367464

[5] https://www.semanticscholar.org/paper/Stock-Market-Prediction-using-Artificial-Neural-.-1-Grigoryan/c94eb05c1501e69d0524d224d4c8edbde2404059/figure/4
