When searching for the best assignment help service, quality should be a top priority. It'

The global asteroid mining market size reached US$1.34 billion in 2021. Looking forward,

The global Payment Orchestration Platform Market size and share was valued at ap

Drug distribution typically refers to the movement of the drug to and from the blood to su

Contracts and agreements play a vital role in various industries and sectors. Proper manag

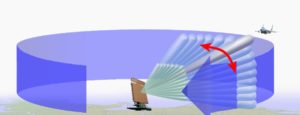

Signals Intelligence (SIGINT) Market size is projected to grow from USD 14.0 billion in 20

5G Satellite Communication Market Insights, Latest Trends, Future and Forecast 2028

Virtual Payment Systems Market 2022 provides information on regional and global markets

The Global Soft Wall Military Shelter Market research report aims to give knowledge of all the

Pipeline Network Market size to grow from USD 8.5 billion in 2019 to USD 12.3 billion by 2

Emergen Research has recently published a new report on the Global Patient Access Front e

‘Global Big Data Analytics in Manufacturing Market’ can be considered a profound analys

Reduced payments due to lower interest rates from certified asset transfers may immediatel

Global Big Data in Healthcare Market can be considered a profound analysis of the global

The T cell is the main building block of the immune system. This blog discusses the develo

The Middle East & Africa Smart Water Management Market FY22-FY26 research report is a s

The Global Plant-Based Food & Beverages Alternatives Market Research Report is a detaile

The latest study released on the Global Robotic Vision Market size, trend, and forecast

India is the second-most populous nation in the world and a significant crop producer. Th

The cultivator was invented in 1912 as a rotary cultivator tractor tool by Arthur Clifford

Eating the right food is important for everyone. However, this can feel like an insurmountable

DNS (Domain Name System) is like a telephone directory for the internet, consisting of t

A detailed overview of the main market provides insight into the changing market dynamics

A detailed overview and changing market dynamics of Fixed-Line Broadband Access Equipment i

Medical Power Supply Devices Market 2022 provides information on regional and global mar

The Global Infectious Disease Testing Instrumentation Market research report aims to give the

An approach commonly known as Behavior-Driven Development (BDD): in order to get bet

Tax Services Market is expected to grow at a Compound Annual Growth Rate (CAGR) of 11.5%

5G Digital Cellular Networks Market, Global Outlook and Forecast 2022-2030 is the latest resea